Implement more memory model emulation toggles

The biggest performance hit with FEX’s x86 emulation has always been emulating the memory model of x86. ARM has added various extensions over the years to make this emulation faster but it still isn’t enough.

- FEAT_LSE - Adds a bunch of atomic memory instructions

  - Original ARMv8.0 doesn’t support this. Massive impact on performance.

- FEAT_LSE2 - Adds unaligned atomics (within a 16-byte granule) to improve performance of x86 atomics.

  - Doesn’t quite cover the full 64-byte cacheline of unaligned atomics that x86 supports

- FEAT_LRCPC - Adds new load instructions which match the x86 memory model

- FEAT_LRCPC2 - Adds even more load/store instructions which match x86 instructions

- FEAT_LRCPC3 - Adds even more, including vector load/store instructions

  - No hardware today supports this extension
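To make the 16-byte granule versus 64-byte cacheline gap concrete, here is a minimal sketch that classifies an access by address and size. The names `fits_in_granule`, `AtomicPath` and `classify` are hypothetical and are not taken from FEX; the only facts assumed are the two sizes quoted in the list above.

```cpp
#include <cstddef>
#include <cstdint>

// True when the byte range [addr, addr + size) stays inside one aligned block
// of `granule` bytes. `granule` is assumed to be a power of two.
constexpr bool fits_in_granule(std::uintptr_t addr, std::size_t size,
                               std::size_t granule) {
    return size != 0 && size <= granule &&
           (addr & (granule - 1)) + size <= granule;
}

// Hypothetical labels for the three cases described in the list above.
enum class AtomicPath {
    WithinGranule,    // inside a 16-byte granule: the case FEAT_LSE2 covers
    WithinCacheline,  // inside a 64-byte cacheline but crossing a granule
    CrossesCacheline  // crosses a 64-byte cacheline boundary
};

constexpr AtomicPath classify(std::uintptr_t addr, std::size_t size) {
    return fits_in_granule(addr, size, 16)   ? AtomicPath::WithinGranule
           : fits_in_granule(addr, size, 64) ? AtomicPath::WithinCacheline
                                             : AtomicPath::CrossesCacheline;
}
```

For example, an 8-byte access at offset 4 lands in the first category, while the same access at offset 12 crosses a granule boundary and falls into the middle category, which is the part that FEAT_LSE2 doesn’t quite cover.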

Even with this set of extensions, emulating x86’s memory model can incur a performance hit approaching 10x. Games feel this the most because they use vector instructions very heavily and, without FEAT_LRCPC3, there are no vector load/store instructions that match the x86 memory model.
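As a rough illustration of what “emulating the memory model” means, consider the classic message-passing pattern. On x86 the ordering below comes for free with ordinary loads and stores, because TSO does not reorder a store with an earlier store or a load with an earlier load. On ARM it has to be requested explicitly, which is what the acquire and release orderings do here. This is a sketch in portable C++ and not FEX code; `Mailbox`, `publish`, `consume` and `run_message_passing` are hypothetical names.

```cpp
#include <atomic>
#include <thread>

// Hypothetical mailbox shared between a producer and a consumer thread.
struct Mailbox {
    int payload = 0;                 // plain, non-atomic data
    std::atomic<bool> ready{false};  // flag that publishes the payload
};

// Write the data, then publish it. The release store keeps the payload write
// from being reordered after the flag write.
inline void publish(Mailbox& box, int value) {
    box.payload = value;
    box.ready.store(true, std::memory_order_release);
}

// Wait for the flag, then read the data. The acquire load keeps the payload
// read from being reordered before the flag read.
inline int consume(Mailbox& box) {
    while (!box.ready.load(std::memory_order_acquire)) {
        std::this_thread::yield();
    }
    return box.payload;
}

// Runs the two halves on separate threads and returns what the consumer saw.
inline int run_message_passing() {
    Mailbox box;
    int seen = 0;
    std::thread consumer([&] { seen = consume(box); });
    std::thread producer([&] { publish(box, 42); });
    producer.join();
    consumer.join();
    return seen;
}
```

An x86 program can contain this pattern with no special instructions at all, so an emulator running on ARM cannot tell which loads and stores need ordering and has to treat them all as ordered. That is where the cost described above comes from.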

With this in mind, we are introducing some sub-options around emulating x86’s TSO memory model to lessen the impact when we can get away with it. These new options can be found in the FEXConfig Hacks tab.

These two new options are only available for toggling when TSO emulation is enabled. If your CPU supports FEAT_LRCPC and FEAT_LRCPC2 then a recommended configuration is to keep the TSO Enabled option enabled, but disable the Vector and Memcpy options. While this will incur a performance hit compared to disabling TSO emulation, it is significantly more stable to keep TSO emulation on.

If you still need more performance, then it may be beneficial to turn off TSO emulation entirely. It’s unstable though! It’s incorrect emulation to gain speed!
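The relationship between the main toggle and the two sub-options can be sketched as below. This only models the gating described above (the sub-options matter only while TSO emulation is on). The struct and field names are hypothetical and are not FEX’s actual configuration keys.

```cpp
// Hypothetical user-facing settings; names are illustrative only.
struct TsoConfig {
    bool tso_enabled;  // main "TSO Enabled" toggle
    bool vector_tso;   // "Vector TSO enabled" sub-option
    bool memcpy_tso;   // "Memcpy TSO enabled" sub-option
};

// What would actually be emulated after applying the gating rule.
struct EffectiveTso {
    bool scalar;  // ordinary loads and stores
    bool vector;  // vector loads and stores
    bool memcpy;  // REP MOVS / REP STOS
};

// Sub-options only take effect while the main toggle is enabled.
inline EffectiveTso resolve(const TsoConfig& cfg) {
    return EffectiveTso{cfg.tso_enabled,
                        cfg.tso_enabled && cfg.vector_tso,
                        cfg.tso_enabled && cfg.memcpy_tso};
}
```

Under this model, the recommended configuration above corresponds to `resolve(TsoConfig{true, false, false})`, which keeps ordering for ordinary loads and stores while dropping it for vector and memcpy operations.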

Vector TSO enabled

This option enables emulating the memory model around vector load/store instructions. This has a HUGE performance impact even on the latest generation of hardware.

Memcpy TSO enabled

This option enables emulating the memory model around x86’s REP MOVS and REP STOS instructions. These are used for doing memory copying and memory setting respectively.

The impact of this option depends heavily on the application. This is because most memcpy and memset functions actually use vectors to modify the memory.
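For readers unfamiliar with these instructions, their basic behavior with the direction flag clear can be modeled as simple forward byte loops. The sketch below covers only the byte-sized variants and does not model memory ordering at all; `rep_movsb` and `rep_stosb` are hypothetical helper names, not FEX functions.

```cpp
#include <cstddef>
#include <cstdint>

// Model of REP MOVSB with the direction flag clear: copy `count` bytes one at
// a time, from the lowest address upwards.
inline void rep_movsb(std::uint8_t* dst, const std::uint8_t* src,
                      std::size_t count) {
    for (std::size_t i = 0; i < count; ++i) {
        dst[i] = src[i];
    }
}

// Model of REP STOSB with the direction flag clear: fill `count` bytes with
// the same value, from the lowest address upwards.
inline void rep_stosb(std::uint8_t* dst, std::uint8_t value,
                      std::size_t count) {
    for (std::size_t i = 0; i < count; ++i) {
        dst[i] = value;
    }
}
```

Because the copy runs strictly forward one byte at a time, overlapping regions behave differently from `memmove`: copying 6 bytes from the start of `"ab......"` to offset 2 of the same buffer yields `"abababab"`. With the Memcpy TSO option enabled, every one of these element accesses also has to respect x86 ordering.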

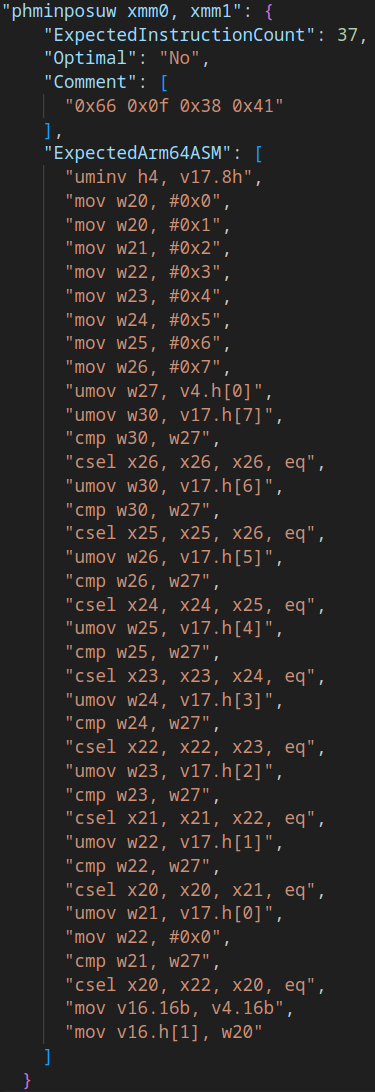

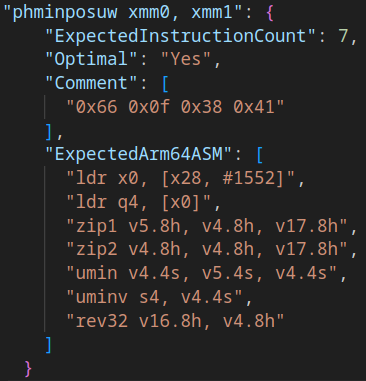

JIT core improvements

Once again this month there has been a focus on JIT optimizations, although this time it might be hard to see what is improving. Overall, benchmarks show roughly a 3% performance improvement, and a good share of this month’s changes are foundational work to lower JIT compile-time overhead in the coming months. As usual there is too much to dive into each change individually, so we’ll just have a list.

- Optimize LOOP/N/E

- Negate more inline constants

- Optimize PF calculation using integers rather than vectors

- Optimize CLC

- Optimize cmpxchg

- Clean up and rewrite a bunch of instructions to remove small amounts of overhead

- Improve 32-bit address mode accesses

- Implement support for prefetch and rdpid instructions
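As background for the PF item in the list above: the x86 parity flag is set when the low 8 bits of a result contain an even number of 1 bits. One integer-only way to compute it is an XOR fold, sketched below. This is an illustration of the flag’s definition and not FEX’s actual implementation; `parity_flag` is a hypothetical name.

```cpp
#include <cstdint>

// Returns true when PF would be set: the low 8 bits of `result` contain an
// even number of 1 bits. Only integer operations are used.
inline bool parity_flag(std::uint64_t result) {
    std::uint32_t x = static_cast<std::uint32_t>(result & 0xFF);
    x ^= x >> 4;  // fold the high nibble into the low nibble
    x ^= x >> 2;  // fold the remaining 4 bits into 2
    x ^= x >> 1;  // fold into bit 0, which is now 1 for an odd bit count
    return (x & 1u) == 0;
}
```

Bits above the low byte are ignored, so `0x101` has the same parity as `0x01`.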

Optimize memcpy and memset IR operations when TSO emulation is disabled

Speaking of optimizations, we have now optimized the implementation of the memcpy and memset instructions to be significantly faster. Sometimes a compiler will inline these instructions, which was causing upwards of 5% of CPU time to be spent doing memory copies.

With this optimization in place we have benchmarked an improvement from 2-3GB/s up to 88GB/s! That’ll teach that code to be slow.

Fix memory leaks in thread creation

FEX had a memory leak where some thread stacks were leaked when their threads shut down. This has now been resolved, which lowers memory usage for long-running applications that shut down threads. In particular, this makes Steam consume less RAM.

We have more memory leaks to solve as we move forward but they are significantly less severe than this.

A ton of small cleanups in the code

This month has had a lot of code cleanup in FEX, but these changes aren’t user facing so they aren’t very interesting. Let it be known, though, that something like half the commits this month were cleaning up or restructuring various bits of code, which isn’t getting a focus here.

Video game showcase

See the 2404 Release Notes or the detailed change log on GitHub.

]]>